Every blockchain follows the same rule: validators must verify that transactions were executed correctly.

The most straightforward way to enforce this is to re-execute the computation. Validators replay all transactions in a block to verify the resulting state transition. This guarantees correctness, but it does not scale.

Now consider a concrete example. Suppose the Parasol engine has just processed a large bunch of transactions and needs to convince the Solana validators that the resulting state is correct. Sending all transaction data and asking validators to re-run everything would work, but it would be costly and time-consuming.

Proof systems offer a more efficient solution: an untrusted prover performs the computation, and produces a proof for a given statement, while a verifier checks that proof efficiently. In our example:

- The prover is Parasol.

- The verifiers are the Solana validators.

- The statement is the correctness of the transactions.

Crucially, the proof can be far smaller than the raw data, and verifying it can be much cheaper than re-executing the full workload.

This blog post focuses on a type of proof systems called SNARKs, preferred for their nice properties, and explores how we typically build them. As the goal is conceptual clarity, some simplifications are made.

SNARKs

A proof system could be interactive, if it requires an exchange of messages, in a prescribed order, between prover and verifier, or non-interactive, meaning that the proof consists of a single message from the prover, i.e. the verifier is not involved in the proof generation process.

In our application, we want to submit proofs to Solana, and have multiple validators check them at will. Thus, we will need a non-interactive proof system.

At a high level, a SNARK is simply proof system that produces small proofs, has a fast verification procedure, and is non-interactive.

Along with efficiency, we also want to make sure that our proof system is secure and expressive.

- Secure means that a proof will only be accepted if the underlying statement is true. In our application, an insecure proof system would allow incorrect transactions to be processed.

- Expressive means that the proof system supports a wide range of possible statements. This is crucial to be able to express complex logic, like the correctness of a transaction, in a language that the proof system can understand.

The Modular design of SNARKs

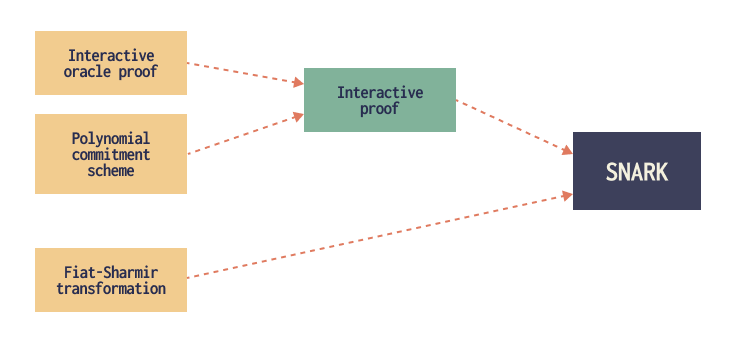

While it was not always the case, in recent years, the cryptographic community has settled on a modular approach for designing SNARKs. It is based around three key components:

- An interactive oracle proof (IOP). This is the backbone of the SNARK, and the piece that determines the expressiveness of the construction. It also influences the proof generation cost.

- A polynomial commitment scheme (PCS), which complements the IOP, and has a big impact on the overall efficiency, particularly on the proof size and verification cost.

- The Fiat–Shamir transformation, which wraps the other two components to produce a SNARK.

Below, we go into a bit more detail about each of these components.

1. Interactive oracle proofs

As discussed above, we could just send the raw transaction data to the verifier, and let them check by themselves, but this data takes a lot of space to send, and a lot of time to verify.

A better alternative is the following. First, the data is processed in a way that even if there is a single transaction among many that is not correct, the error will propagate through most of the data, and so it will be easy to spot.

Then, the verifier just picks small pieces at random, and see if they check out. Very informally, the verifier goes: "Okay, show me this part. Hmm, this seems correct. Now show me this other part of the data. Alright, now I want to see that other one." After spot-checking a few places and not finding any errors, the verifier considers themselves satisfied, and accepts the proof. The intuition is that, because the error has been amplified and checks are random, the verifier has a very high probability of catching any errors, even if they do not check the whole thing.

An interactive oracle proof (IOP) is an abstraction of an interactive proof system, which captures the idea that the prover's raw data is available "on demand", but the prover never shares the full transaction data, only small pieces. This is modeled as the verifier querying an oracle for small pieces of the transaction data, hence the name.

The IOP model allows us to:

- Harness the power of interaction, by giving the verifier the power to "ask the right questions" about the data.

- Abstract away the problem of reading data on the verifier's side, and reason about how to build a good proof system assuming that this will not be a problem.

It is important to realize that, by itself, this IOP notion is just an ideal model, not a real-world construction. The prover cannot send the data in full because, as discussed, that would not be efficient. But if the prover just reveals whatever pieces the verifier requests when they request them, the verifier has no way of knowing that these pieces are consistent with each other, and they are not being made up on the spot to answer the verifier's queries.

2. Polynomial commitments

A polynomial commitment scheme allows us to take an IOP and compile it into an actual real world scheme, by replacing the ideal oracle by a real cryptographic construction.

To see how it achieves this goal, we need to talk a bit about data representation. The proof system will represent all the transaction data as a small number of large-degree polynomials. You can think of as an encoding of a large amount of data, which is particularly amenable to proof systems.

Moreover, parts of the data can be retrieved by evaluating the polynomial at certain points. So in this language, the verifier questions become evaluation requests at certain points.

A PCS works in two phases:

- First, it takes a large-degree polynomial, and produces a small commitment to it. A commitment is a small piece of data, much smaller than the data originally encoded, that the prover shares with the verifier at the beginning of the interaction.

- At any time, the verifier can ask for the evaluation of the polynomial at some point of their choice (i.e. retrieving some small piece of the transaction data). The PCS allows the prover to provide the evaluation, together with a small proof of correct evaluation.

The verifier can check this proof to be convinced that the prover's answer is consistent with the initial commitment. This is how we ensure consistency and "on demand" checking, without the need to send the raw data in advance.

3. The Fiat–Shamir transformation

At this point, we have gotten rid of any trusted or ideal components. However, one issue remains: this process of question and answer between verifier and prover means that we still have an interactive proof system, and we want a non-interactive one.

The Fiat–Shamir transformation is a generic transformation that can be applied to a wide variety of interactive proof systems. It yields a non-interactive proof system with expressiveness, security and efficiency characteristics very similar to those of the starting interactive proof system.

On a high level, it simulates the "questions" from the verifier, and thus performing the whole interaction inside the prover's head, and outputting the transcript of the interaction as a proof.

Crucially, this is done in a way in which the prover has absolutely no control over the questions generated. Otherwise, they could fool the system by directing the questions to the parts of the data which they know will pass verification, while ignoring those in which an inconsistency would be detected. Or, since the interaction happens in the prover's head, even exploiting the fact that they can answer questions out of order.

The Fiat–Shamir transformation works by by generating each question via hashing all the data used in the interaction so far. This ensures unpredictability of the questions, and enforces the correct order of the protocol.

An important remark is that the above is a big oversimplification of the situation. Whilst the Fiat–Shamir transformation is on the surface a very simple construction, and has been in use for decades, there are lots of subtleties, both on the theoretical and implementation level, that have led to vulnerabilities over the years. But this is a whole other topic.

Mixing and matching

There is a large number of interactive oracle proofs and polynomial commitment schemes in the cryptographic literature. Lots of new constructions have been developed in the last decade, with new and improved designs coming up all the time.

For example, Plonk was originally introduced as a SNARK which combined a novel IOP with the well-known KZG polynomial commitment scheme. Over time, the definition has evolved, and because the interesting contribution was the IOP, people have started to use the term Plonk to refer to just the IOP (or any of its multiple generalizations).

Another example are STARKs. While the terminology might suggest something else, a STARK is just one particular case of SNARK, which combines an IOP not so different from the one in Plonk with a FRI-based PCS. This results in slower verification, but faster prover than KZG.

These are just some common examples. Except for some mild restrictions, we can combine basically any IOP with any PCS, then apply the Fiat–Shamir transformation, and obtain a SNARK that inherits the qualities of its building blocks. This allows for different tradeoffs between expressiveness, security, efficiency of the prover and verifier, and proof size, among other properties. We can use this modularity to our advantage, choosing the best components for each particular application.

In upcoming articles, we will explore some of these constructions in more detail.

Closing thoughts

We have discussed some of the important qualities of a good SNARK, how they are inherited from two of their key components, the interactive oracle proof and the polynomial commitment scheme, and how these choices lead to different tradeoffs.

More recently, researchers and practitioners have been looking not at how to combine components of a SNARK, but how to recursively combine multiple SNARKs together. The goal is to side-step some of these tradeoffs, and get the best properties from each SNARK. But this is a topic for another day.

Follow Parasol for more on ZK research and our journey to perpify internet capital markets on Solana.